Beyond Coding

I love coding. I grew up coding and worked for a while as a programmer. Skills in coding and computational thinking are highly relevant for participating in our increasingly automated world.

Beyond mere coding, a revolution in computing is maturing: neural networks.

Neural networks consist of simulated neurons rather than classic algorithms, and they aren't programmed so much as trained. You create a bunch of neurons and connect them, feed the network lots of examples and examine the output, repeatedly tweaking the strengths of the neuronal connections until the output moves closer to what you want, thereby evolving a sort of simulated brain that performs a function. How it performs the function remains largely opaque. The "how" resides in the connection weights of what are termed the "hidden layers" of the network.
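To make the "trained, not programmed" idea concrete, here is a minimal sketch in plain Python. It is only an illustration under my own assumptions: the function names (`make_network`, `forward`, `train_step`, `train`), the four hidden neurons, the learning rate and the XOR example data are all made up for this post, and it is not how MemBrain or any of the products mentioned here actually work. It builds a handful of simulated neurons, connects them with random weights, then repeatedly shows the network examples and nudges every connection to shrink the error.

```python
import math
import random


def sigmoid(x):
    """Squash any number into the range 0..1 (a simulated neuron 'firing')."""
    return 1.0 / (1.0 + math.exp(-x))


def make_network(n_in, n_hidden, rng):
    """Create random connections: inputs -> hidden neurons -> one output neuron."""
    return {
        # Each hidden neuron has one weight per input, plus a bias weight.
        "w_hidden": [[rng.uniform(-1, 1) for _ in range(n_in + 1)]
                     for _ in range(n_hidden)],
        # The output neuron has one weight per hidden neuron, plus a bias weight.
        "w_out": [rng.uniform(-1, 1) for _ in range(n_hidden + 1)],
    }


def forward(net, inputs):
    """Feed one example through the network; return (hidden activations, output)."""
    x = list(inputs) + [1.0]  # the trailing 1.0 feeds the bias weight
    hidden = [sigmoid(sum(w * v for w, v in zip(ws, x))) for ws in net["w_hidden"]]
    h = hidden + [1.0]
    output = sigmoid(sum(w * v for w, v in zip(net["w_out"], h)))
    return hidden, output


def train_step(net, inputs, target, rate):
    """Nudge every connection weight to reduce the error on one example."""
    hidden, output = forward(net, inputs)
    x = list(inputs) + [1.0]
    h = hidden + [1.0]
    # Error signal at the output neuron (squared error through the sigmoid).
    delta_out = (output - target) * output * (1.0 - output)
    # Error signal passed back to each hidden neuron, using the old output weights.
    delta_hidden = [delta_out * net["w_out"][j] * hidden[j] * (1.0 - hidden[j])
                    for j in range(len(hidden))]
    for j in range(len(h)):
        net["w_out"][j] -= rate * delta_out * h[j]
    for j, ws in enumerate(net["w_hidden"]):
        for i in range(len(x)):
            ws[i] -= rate * delta_hidden[j] * x[i]


def mean_error(net, examples):
    """Average squared difference between the network's outputs and the targets."""
    return sum((forward(net, x)[1] - t) ** 2 for x, t in examples) / len(examples)


# Illustrative training data: output 1 only when exactly one input is 1 (XOR).
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def train(examples, n_hidden=4, epochs=5000, rate=0.5, seed=0):
    """Build a network, train it, and return (network, error before, error after)."""
    rng = random.Random(seed)
    net = make_network(2, n_hidden, rng)
    before = mean_error(net, examples)
    for _ in range(epochs):
        for inputs, target in examples:
            train_step(net, inputs, target, rate)
    return net, before, mean_error(net, examples)


if __name__ == "__main__":
    net, before, after = train(XOR)
    print("error before training:", before)
    print("error after training: ", after)
    for inputs, target in XOR:
        print(inputs, "target", target, "output", forward(net, inputs)[1])
```

Notice that nowhere in the code is there a rule saying what XOR is. Whatever the network ends up "knowing" lives in the numbers inside `w_hidden` and `w_out`, which is exactly why the how is so hard to read off afterwards.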

Neural networks are being used in the mainstream right now:

- Facebook has deployed a neural network called "DeepFace" which recognises human faces in images.

- Nuance's speech recognition engine, used in Apple's Siri, also uses neural networks. Intriguingly, it exploits the graphics processing units that have become incredibly powerful thanks to the popularity of video games.

- The Google Translate app runs a neural network locally on your phone.

- MasterCard offers a neural network service to business customers using IBM's "Watson Analytics". More on this below.

There's also plenty in the immediate pipeline:

- Google is embedding an optimised neural network to help its self-driving cars spot pedestrians.

- IBM is producing "TrueNorth" computer chips whose physical structure is neural, and research into the closely related "memristor" technology is growing rapidly.

- Facebook is successfully training neural networks to do all kinds of things, such as physics, mapping and language processing.

If you're curious to explore neural networks, there are free tools you can have a play with.

For instance, "MemBrain" allows you to create your own neural network and train it with data.

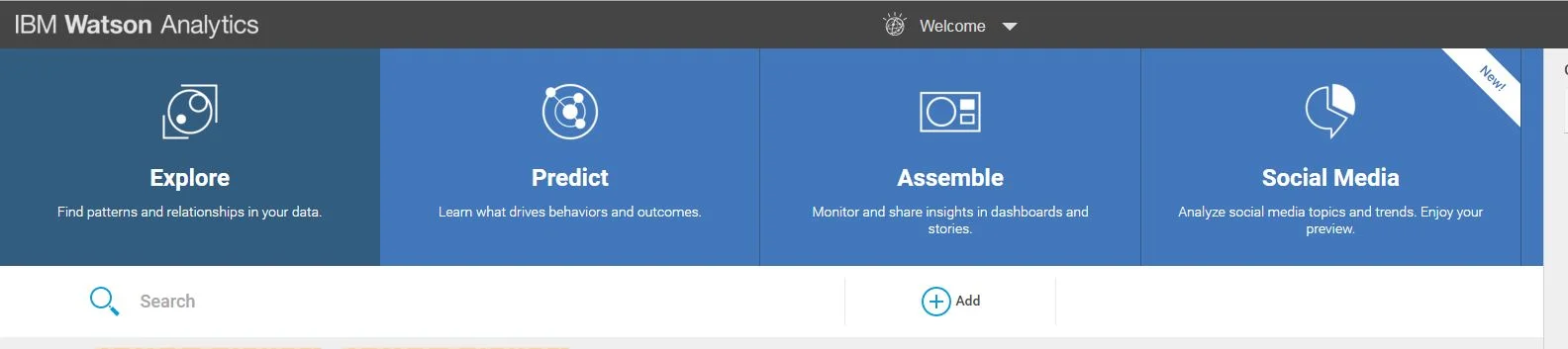

Also, IBM's "Watson Analytics" is free to use online. You can upload datasets and see how it makes sense of them, often offering up questions or connections for further exploration.

What to do with all this? As for the teaching of coding and computational thinking - bring it on!

How can we go further? Insight into the power of repeated and branching algorithms doesn't begin to prepare us for what is essentially distributed extended cognition. Incredibly sophisticated artificial intelligence, including neural network computing, is embedded in our lives and progressing in rapid cascades.

How might we develop critical literacy regarding high-order artificial intelligence?

For teachers: see this thought-piece by Pearson for what's in store, and note that in Australia, NAPLAN essays will be marked by AI in 2017.

For young people: a chance to grapple with the ontological, teleological, philosophical and political questions that extended cognition raises at the most practical levels. That means understanding that if they're on Facebook, its AI forms a functional part of their attention discrimination system, and that when they search Google, they are teaching Google as much as Google is teaching them. I wonder how to do this with younger students in particular. I speculate here that a degree of personification might just hold some promise. Dennett's "intentional stance" could be made to work as a simple, quasi-metaphoric framing for children of what technology is and what it does. Quick, someone write a children's book like this! (Has someone already?)

The Australian ICT general capability just doesn't use language that grapples with questions of third-party machine agency: it's not just about "using", it's about extending into and being subsumed by. The "into" and "by" is an ecosystem of non-human agents, each with something like intent or agenda, and increasingly consisting of hybrids that include "hidden layers" of neural networks whose functions were evolved rather than algorithmically programmed.

It's all getting rather complex.